AI Under the Hood – Feb – Week 3


AI progress often looks chaotic from the outside. New model names appear every week, demos circulate on social media, and timelines fill with claims that everything has “changed overnight.” For anyone new to the space, this can feel overwhelming and technical in a way that seems inaccessible.

The reality is calmer and more structured than it appears.

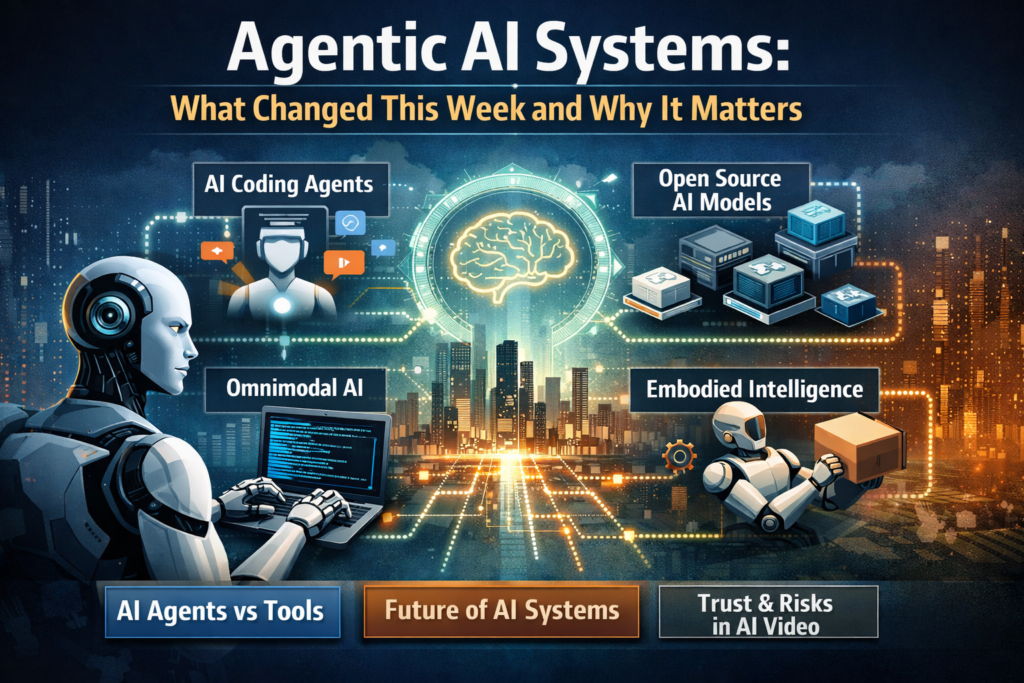

This past week did not introduce a single dramatic breakthrough. Instead, it revealed something more important. Multiple layers of AI progress are now moving forward at the same time. Agentic AI systems, AI coding agents, open source AI models for enterprise use, omnimodal interfaces, and embodied artificial intelligence are all advancing together. When that happens, the impact compounds.

You do not need to understand architectures or training methods to grasp why this matters. What is changing is not just what AI can do, but how agentic AI fits into real systems, teams, and products.

Agentic AI systems are designed to operate with goals, not just prompts. Earlier generations of AI tools waited for instructions, responded once, and stopped. Agentic systems behave differently. They can plan steps, execute actions, evaluate outcomes, and continue working toward an objective with minimal human input.

This shift changes how AI fits into real workflows. Instead of acting like isolated tools, agentic AI systems function more like collaborators that can operate in the background, handle multi-step tasks, and improve their own output over time. The focus moves from one-off responses to sustained progress.

Several long-running transitions crossed practical thresholds at the same time.

First, AI moved from static models toward agentic systems. These systems do more than respond. They plan, act, check their own work, and continue without constant supervision.

Second, AI expanded beyond text into omnimodal interaction. These systems can see, hear, speak, and respond across multiple sensory channels at once.

Third, attention shifted from impressive demos to deployable systems. The conversation is less about what looks good in a lab and more about what can be integrated into workflows, infrastructure, and real products.

Finally, AI is becoming embodied. Intelligence is no longer confined to a chat interface. It is increasingly connected to movement, spatial awareness, and physical interaction.

None of these shifts are entirely new on their own. What changed this week is that they are all advancing together.

Understanding the difference between AI agents and traditional tools is key to understanding this moment.

AI tools respond to direct instructions. You ask a question, get an answer, and the interaction ends. If something goes wrong, the human must step in again.

AI agents operate differently. You give them an objective instead of a task. They decide what steps are needed, execute those steps, evaluate results, and adjust their approach. This is why agentic AI systems feel less like software features and more like autonomous participants inside a system.
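The loop described above (decide on a step, execute it, evaluate the result, adjust) can be sketched in a few lines. This is a minimal illustration, not how any particular product works: the names `run_agent`, `toy_plan`, and `toy_act` are hypothetical, and the "world" is reduced to a single number so the control flow stays visible.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class AgentRun:
    achieved: bool                      # did the agent reach the objective?
    state: int                          # final state of the toy "world"
    history: List[str] = field(default_factory=list)  # steps it chose


def run_agent(
    objective: int,
    plan: Callable[[int, int], str],
    act: Callable[[int, str], int],
    state: int = 0,
    max_steps: int = 10,
) -> AgentRun:
    """Repeat evaluate -> plan -> act until the goal is met or the budget runs out."""
    history: List[str] = []
    for _ in range(max_steps):
        if state == objective:          # evaluate: is the objective reached?
            return AgentRun(True, state, history)
        step = plan(state, objective)   # plan: choose the next step
        state = act(state, step)        # act: execute that step
        history.append(step)
    # Step budget exhausted: report whatever was achieved.
    return AgentRun(state == objective, state, history)


# Hypothetical stand-ins for a real planner and real actions.
def toy_plan(state: int, objective: int) -> str:
    return "increase" if state < objective else "decrease"


def toy_act(state: int, step: str) -> int:
    return state + 1 if step == "increase" else state - 1


run = run_agent(objective=3, plan=toy_plan, act=toy_act)
print(run.achieved, run.history)
```

A tool, by contrast, would be a single call to `toy_act` with no loop around it. The `max_steps` budget is the part a human still controls: it bounds how long the agent may keep going on its own.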

This distinction changes how teams work. Humans focus more on goals and decisions, while agents handle execution and iteration.

Two recent releases made this shift especially clear: Claude Opus 4.6 and GPT-5.3 Codex.

Traditional coding assistants act like smart autocomplete. You ask for a function, they generate code, and they stop. If something breaks, you prompt again.

AI coding agents behave more like junior developers. You give them an objective. They write code, run tests, debug errors, revise their output, and repeat the process until the task is complete. This persistence and self-correction is the real milestone.
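The write, test, revise cycle can be shown with a toy example. This is a sketch under stated assumptions: `fake_model` is a hypothetical stand-in for a real code model, `run_tests` checks one hard-coded case, and `coding_agent` is an illustrative name rather than any vendor's API.

```python
from typing import Callable, List, Optional, Tuple


def run_tests(source: str) -> Optional[str]:
    """Run the candidate code and one test; return None on success, else the error text."""
    namespace: dict = {}
    try:
        exec(source, namespace)                       # define the candidate function
        assert namespace["add"](2, 3) == 5, "add(2, 3) should be 5"
    except Exception as exc:                          # any failure becomes feedback
        return f"{type(exc).__name__}: {exc}"
    return None


def coding_agent(
    generate: Callable[[Optional[str]], str],
    max_attempts: int = 3,
) -> Tuple[Optional[str], List[str]]:
    """Generate code, test it, and feed failures back until tests pass or attempts run out."""
    feedback: Optional[str] = None
    errors: List[str] = []
    for _ in range(max_attempts):
        source = generate(feedback)                   # write (or revise) the code
        feedback = run_tests(source)                  # run the tests
        if feedback is None:
            return source, errors                     # tests pass: task complete
        errors.append(feedback)                       # otherwise, retry with the error
    return None, errors


# Hypothetical model: its first draft has a bug, and it corrects it once it sees an error.
def fake_model(feedback: Optional[str]) -> str:
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


source, errors = coding_agent(fake_model)
print(errors)
print(source)
```

The difference from autocomplete is the feedback path: the failing test output goes back into the next generation step without a human re-prompting. Running generated code with `exec` is acceptable in a toy like this, but a real setup would isolate execution in a sandbox.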

This shift shows how agentic AI systems are moving from simple code assistance to autonomous collaborators inside real software development workflows. Developers spend less time on scaffolding and debugging, and more time on design decisions and system logic.

Small teams gain leverage. Larger projects move faster. The role of the developer evolves rather than disappears.

While flagship models attract attention, open source AI models made equally meaningful progress.

Systems like GLM OCR, Step 3.5 Flash, Intern S1 Pro, and Qwen 3 Coder Next demonstrate how capable open models have become. They handle document understanding, coding tasks, and reasoning workloads that once required large proprietary systems.

What stands out is efficiency. These models are optimized to run faster, cost less, and deploy more easily on private infrastructure. This matters for enterprises that care about control, compliance, and reliability.

Platforms like Hugging Face show how open source AI models are being adopted by enterprises for secure and controllable deployments. Innovation is no longer centralized. Competitive performance is emerging across ecosystems.

Omnimodal AI systems process multiple types of input and output at the same time. They can look at images, listen to speech, respond with voice, and adapt in real time.

This matters because humans do not communicate through text alone. We speak, gesture, observe, and react simultaneously. Omnimodal systems bring AI closer to natural human interaction.

Projects like MiniCPM o4.5 and Interact Avatar point toward new product categories: training systems that respond to voice and movement, assistants that operate in physical environments, and interfaces that feel less like software and more like interaction.

The intelligence itself is only part of the story. The interface is becoming just as important.

Another important signal is the rise of embodied artificial intelligence.

Research such as 3DiMo and FastVMT is improving how AI understands 3D environments and motion. This is essential for robotics, spatial reasoning, and real-world interaction.

Projects like InterPrior connect reasoning with physical movement, narrowing the gap between digital intelligence and physical action. Even research that seems playful, such as Paper Banana, reflects a deeper trend. Models are learning to interpret intent and context, not just literal input.

Embodiment grounds intelligence in the physical world, where uncertainty, constraints, and consequences exist.

Progress also introduces risk.

Tools like EditYourself show how convincingly AI can now manipulate speech and lip-sync in video. The key change is accessibility. High-quality manipulation is becoming easier and cheaper to produce.

This challenges the assumption that video is inherently trustworthy. For society, this raises questions about media credibility. For businesses, it creates risks around brand reputation and misinformation.

The response cannot be panic or rejection. It must be awareness and adaptation. Verification systems, disclosure standards, and internal safeguards will matter more as these tools spread.
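One simple building block for such verification is comparing a file against a digest the publisher has released through a trusted channel. The sketch below assumes that setup; `sha256_hex` and `matches_published` are illustrative helper names, and the byte strings stand in for real video files. It can only show that a file differs from the published original, not detect manipulation in general.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_published(data: bytes, published_digest: str) -> bool:
    """True if the data hashes to the digest the publisher released."""
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(sha256_hex(data), published_digest.lower())


# Placeholder bytes standing in for an official video file.
original = b"official-announcement-video-bytes"
published = sha256_hex(original)        # digest the publisher would release

print(matches_published(original, published))              # unmodified copy
print(matches_published(original + b"edited", published))  # altered copy
```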

This week did not deliver a single headline moment. It delivered convergence.

Agents are becoming more useful than tools. Systems are becoming more important than demos. Interaction is starting to matter as much as raw intelligence.

For founders, CTOs, and operators, the question is no longer whether AI is capable. It is how agentic AI systems fit together and where they can be trusted to operate autonomously.

The future of agentic AI systems will depend on how well they integrate into real workflows, physical environments, and organizational decision-making.

At Nerobyte, we help teams apply agentic AI systems through practical AI solutions and digital marketing strategies. Our role is not to amplify hype, but to interpret signals, connect patterns, and help teams understand what actually changes their decisions.

The pace will continue. The noise will grow. The advantage will belong to those who stay calm, grounded, and focused on the deeper shifts underneath.

